Designing Deterministic High-Performance Computers

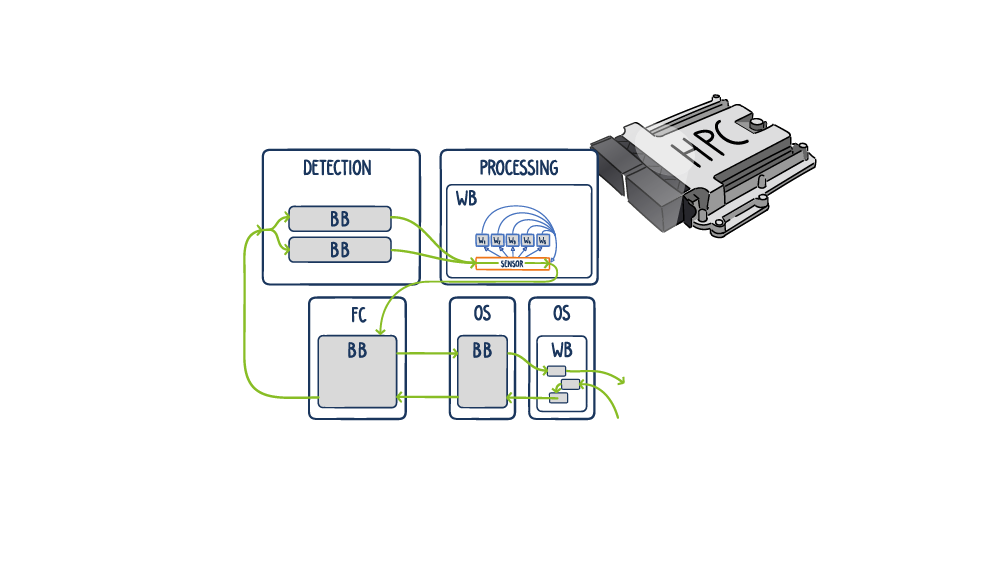

Modern high-performance computers (HPCs) running the AUTOSAR Adaptive Platform on QNX or Linux are increasingly common. Architects who want to use model-based approaches to build them reliably and predictably face new challenges:

HPCs are very hard to model in the way traditionally used for AUTOSAR Classic Platform systems. Instead, models need a level of detail better suited to this new technology. One proven method is a black-box/white-box modeling concept.

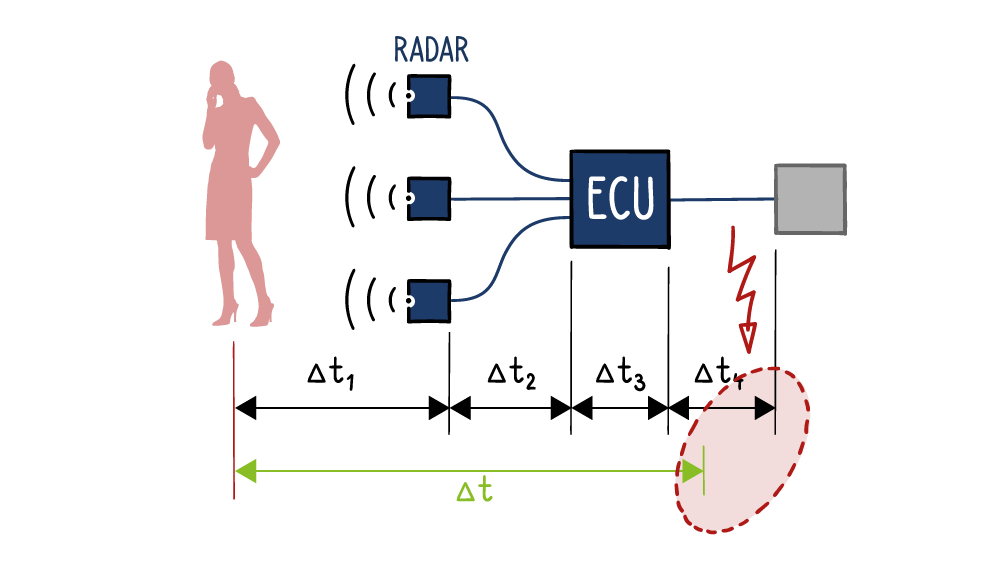

Timing Optimization for Radar ECU

In complex detection systems that combine radar, lidar and cameras, the output must respond to an input within a bounded time. This does not have to be complicated. With our chronSUITE timing toolkit, even the most complex multicore systems can be analyzed to identify the issues that cause end-to-end latency requirements to be violated. If you are facing such challenges, learn how the chronSUITE timing toolkit can help you.

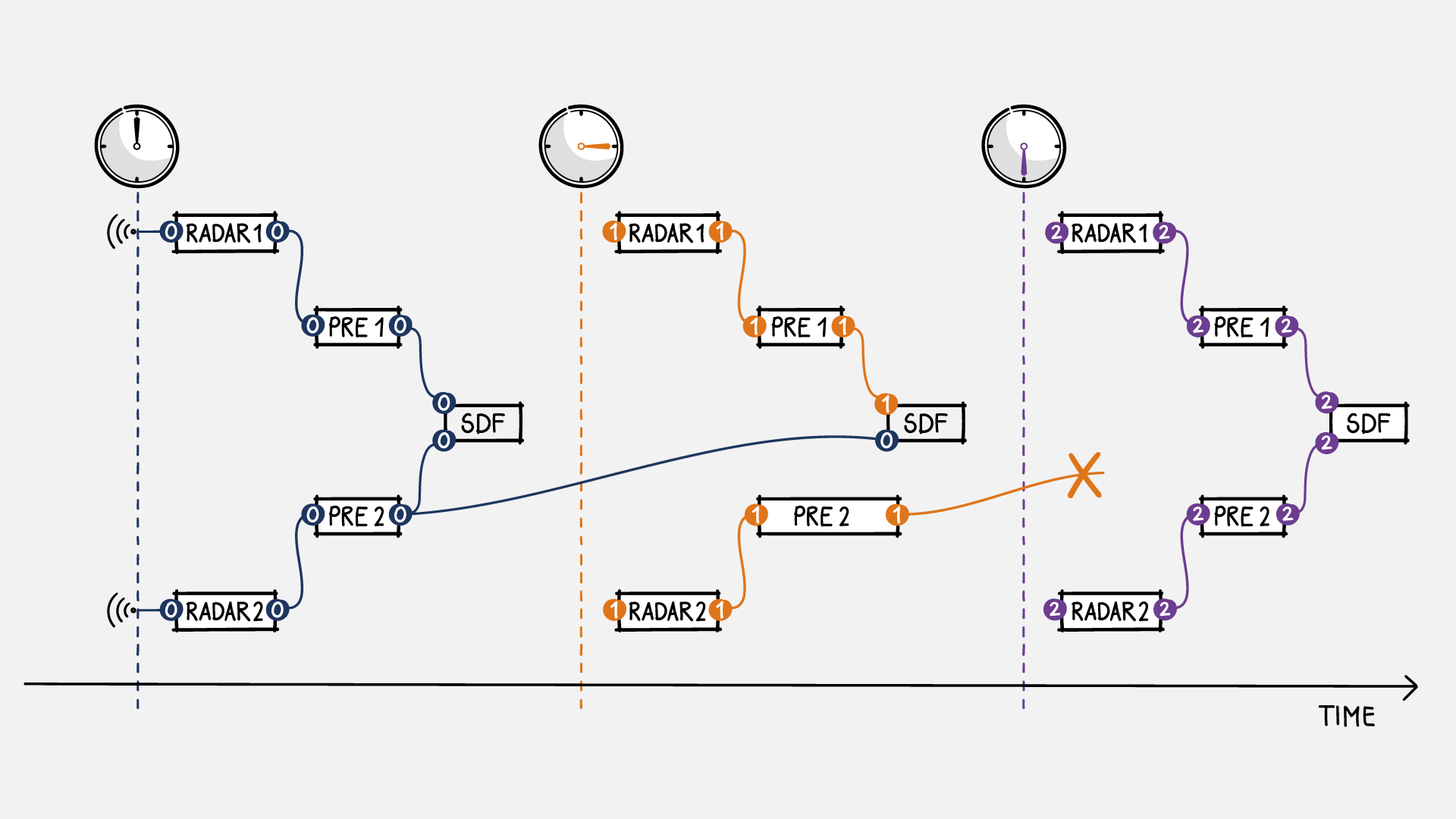

Sensor Data Fusion in High Performance Domain Controllers

Sensor data fusion (SDF) algorithms for autonomous driving place strict requirements on the data age and synchronicity of input sensor signals. If the deviation between different environmental sensors such as radar, lidar or camera becomes too high, SDF algorithms can no longer capture a consistent state of the environment. For example, objects in the field of observation might not be recognized by all sensors, or might appear at different space-time coordinates.

This error has several possible causes: different sampling rates of the various sensors; identical sampling rates with unsynchronized sampling times; and diverging signal latencies between the acquisition of sensor data and its processing by the SDF algorithm. Accordingly, the maximum tolerated deviation in data age between sensor signals is a very important design constraint. In our experience, many optimization cycles that take the influences of hardware and software into account are required to obtain a reliable and robust SDF design.
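The three causes named above (sampling rate, sampling phase and signal latency) can be combined into a simple data-age check. The following is a minimal sketch, not a chronSUITE model: the `Sensor` class, its fields and all numeric values are hypothetical and chosen only for illustration. It assumes strictly periodic sampling and a constant latency per sensor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sensor:
    """Hypothetical sensor description; all values are illustrative."""
    name: str
    period_ms: float   # sampling period
    offset_ms: float   # time of the first sample (sampling phase)
    latency_ms: float  # delay from acquisition until the SDF algorithm can read it


def latest_sample_age(sensor: Sensor, fusion_time_ms: float) -> float:
    """Age of the newest sample that has already arrived at fusion_time_ms."""
    # A sample taken at time t is only visible to the fusion step at t + latency.
    window = fusion_time_ms - sensor.latency_ms - sensor.offset_ms
    if window < 0:
        raise ValueError(f"{sensor.name}: no sample has arrived yet")
    # Index of the newest sample taken early enough to have arrived.
    k = int(window // sensor.period_ms)
    sample_time = sensor.offset_ms + k * sensor.period_ms
    return fusion_time_ms - sample_time


def max_age_deviation(sensors, fusion_time_ms: float) -> float:
    """Largest difference in data age between any two sensors."""
    ages = [latest_sample_age(s, fusion_time_ms) for s in sensors]
    return max(ages) - min(ages)


def within_tolerance(sensors, fusion_time_ms: float, tolerance_ms: float) -> bool:
    """True if the age deviation stays inside the design constraint."""
    return max_age_deviation(sensors, fusion_time_ms) <= tolerance_ms
```

With a hypothetical radar (50 ms period, 10 ms latency) and camera (33 ms period, 5 ms offset, 20 ms latency), a fusion step at 100 ms sees data ages of 50 ms and 29 ms, a deviation of 21 ms. Repeating the check over many fusion times shows how the deviation varies as the sampling phases shift against each other.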

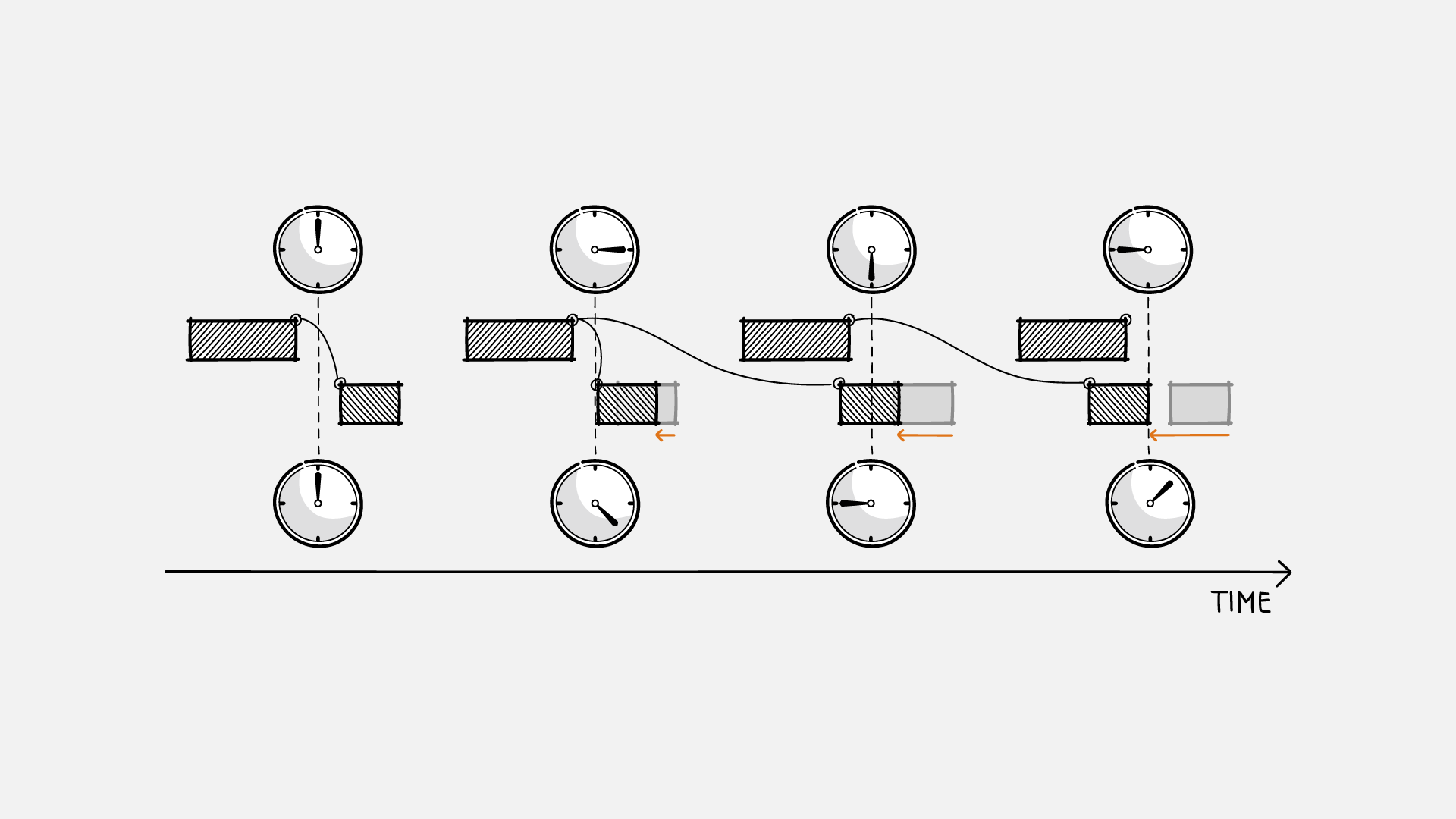

Understanding the Effects of Clock Drift and Asynchronicity

The functional correctness of hard real-time applications for ADAS and autonomous driving depends heavily on the temporal consistency of sensor and control data. In such distributed systems, each microcontroller has its own physical clock used to keep time. Unlike ideal clocks, physical clocks have finite precision, and without active synchronization the different clocks in a system gradually drift apart over time. As a result, data may become inconsistent when their ages and temporal divergence are greater than the absolute or relative thresholds allowed by the application.
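As a rough illustration of how quickly unsynchronized clocks diverge, the sketch below assumes a constant drift rate per clock, expressed in parts per million (1 ppm corresponds to 1 µs of error per second). The function names and the example drift values are hypothetical; real oscillators also vary with temperature and age, which this simple linear model ignores.

```python
def clock_divergence_us(drift_a_ppm: float, drift_b_ppm: float, elapsed_s: float) -> float:
    """Divergence in microseconds between two free-running clocks after elapsed_s seconds."""
    # Only the relative drift between the two clocks matters.
    relative_ppm = abs(drift_a_ppm - drift_b_ppm)
    # 1 ppm equals 1 microsecond of error per second of elapsed time.
    return relative_ppm * elapsed_s


def time_to_exceed_s(drift_a_ppm: float, drift_b_ppm: float, threshold_us: float) -> float:
    """Seconds until the divergence reaches threshold_us (infinite if the drifts are equal)."""
    relative_ppm = abs(drift_a_ppm - drift_b_ppm)
    if relative_ppm == 0:
        return float("inf")
    return threshold_us / relative_ppm
```

For example, with assumed drifts of +50 ppm and -30 ppm, the clocks diverge by 800 µs after 10 s, and a 1 ms consistency threshold is reached after 12.5 s without resynchronization.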

Sporadic system failures caused by timing errors are hard to detect and often missed by conventional integration testing. To understand the effects of clock drift and asynchronicity, a model-based approach has proven to be faster and more efficient: INCHRON tools for virtual integration provide the means to simulate and analyze these effects while verifying that design goals for data consistency and safety are met.

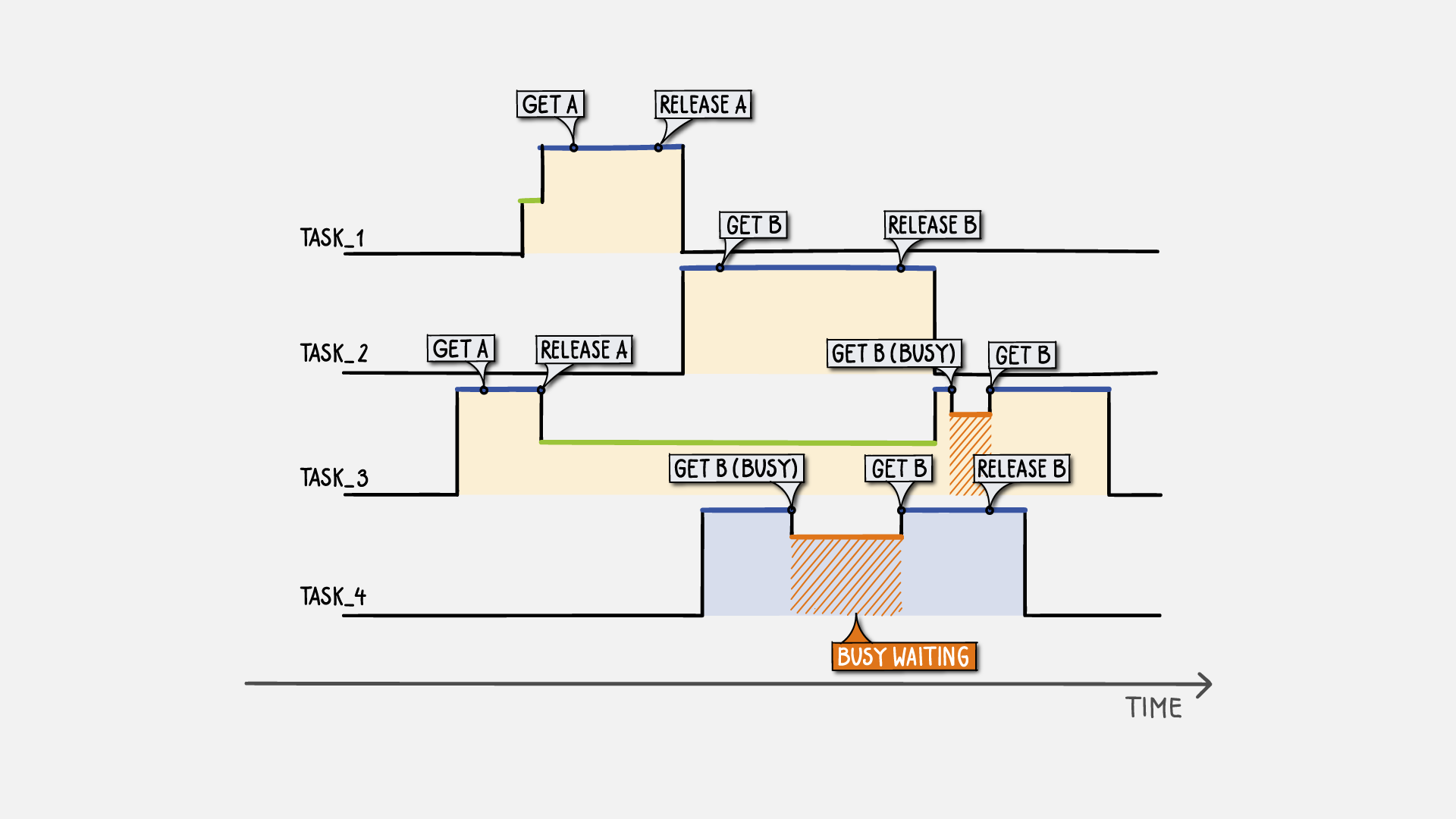

Migration to Multi-Core and High-Performance SoCs

The migration of legacy systems to multi-core is more than just moving to a new processor. A significant boost in performance and increased power efficiency are often expected without taking into account the added complexity of multiprocessing. Processes and functions must be executed in the right order while balancing the utilization of available processing units. A key issue here is locating critical code sections that, when executed in a parallel environment, may cause data inconsistencies and eventually result in system failure. To manage the transition successfully and avoid typical pitfalls, development teams need powerful analysis tools that reach beyond the scope of conventional debugging and provide aspect-oriented trace visualization combined with interactive statistics and fully automated requirement evaluation.
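To make the notion of a critical code section concrete, the following sketch shows a shared read-modify-write operation guarded by a lock. It is a generic illustration in Python, not code from a migrated ECU, and the names `SharedCounter` and `run_workers` are hypothetical. Without the lock, concurrent increments may be lost, which is the kind of data inconsistency described above.

```python
import threading


class SharedCounter:
    """Counter shared between threads; increment() is the critical section."""

    def __init__(self) -> None:
        self.value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # The read-modify-write below must not interleave with another thread,
        # so it is executed while holding the lock.
        with self._lock:
            self.value += 1


def run_workers(counter: SharedCounter, n_threads: int, n_increments: int) -> int:
    """Run n_threads workers that each increment the counter n_increments times."""
    def work() -> None:
        for _ in range(n_increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value
```

With the lock in place, four workers performing 1000 increments each always yield 4000. The lock also serializes the workers, which is one reason a multi-core migration may not deliver the expected performance gain automatically.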

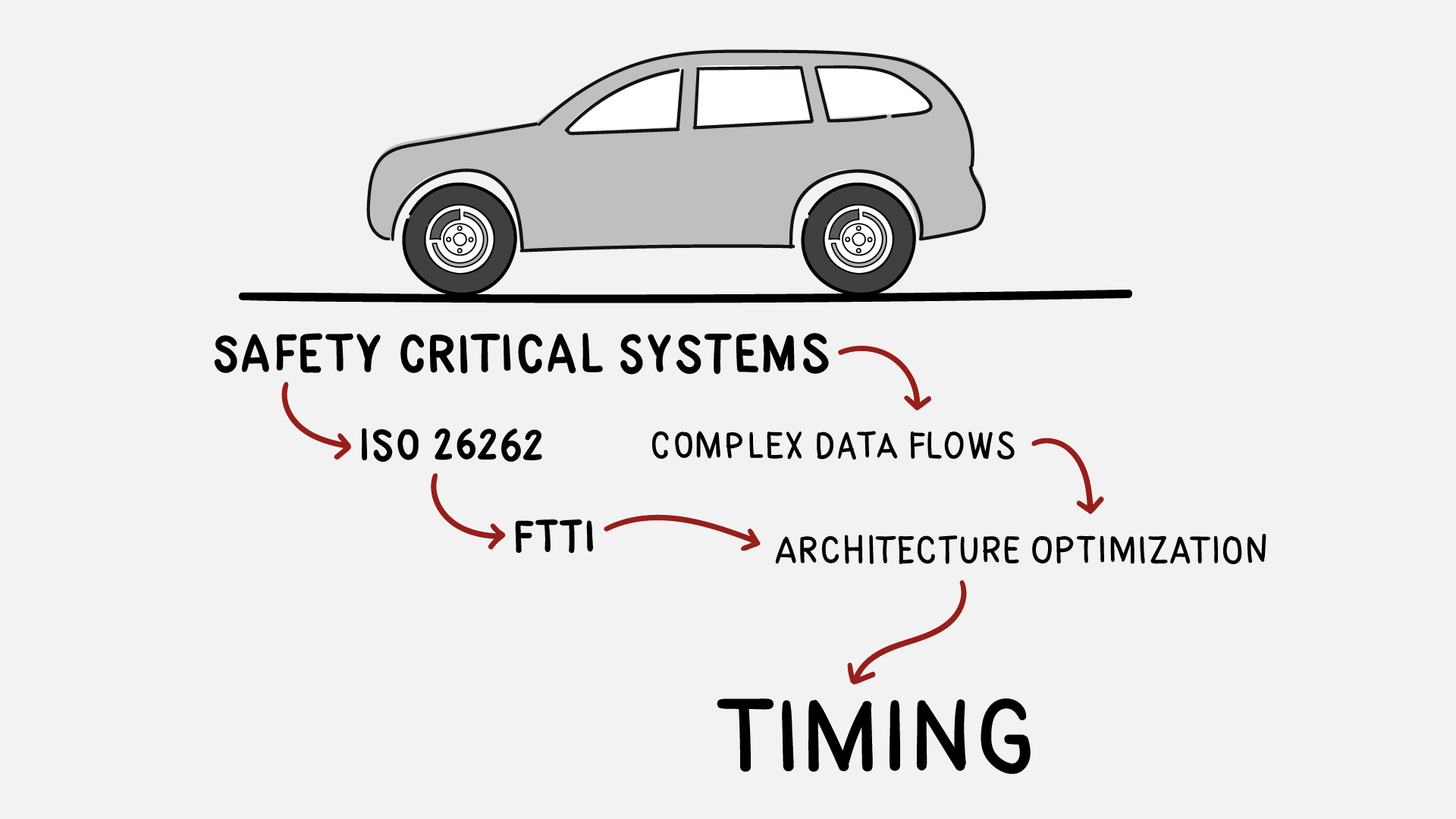

Model-Based Simulation of Safety-Critical Automotive Control Systems

Embedded systems contribute greatly to the efficiency, safety, and usability of today’s means of transport such as cars and airplanes. Because of the hazards and risks involved in their operation, safety standards like DO-178C for avionics and ISO 26262 for automotive recommend the application of methods and tools regarded as state-of-the-art. Functional safety requirements imposed on hardware and software imply detecting malfunctions and taking corrective action before hazards can occur. A key challenge is predicting and verifying the system’s timing behavior. Experience from numerous automotive development projects shows that model-based methods and real-time simulation tools should be used at an early stage in order to effectively guide design decisions and achieve the safety goals set at the system level.